Editor’s note: Because this issue is so crucial to our lives, I’ve broken the original post down into these six topics—each in its own post to allow for easier reading.

This is a huge topic. That’s why the original post ran to more than 7,000 words and why I have broken it out into six separate posts. Even so, it didn’t come close to covering every aspect of the importance of AI to our future. My hope is to motivate readers to think about this, learn more, and have many conversations with their families and friends.

Feel free to jump around among the six posts:

- An introduction to the opportunities and threats we face as we near the realization of human-level artificial intelligence

- What do we mean by intelligence, artificial or otherwise? (this post)

- The how, when, and what of AI

- AI and the bright future ahead

- AI and the bleak darkness that could befall us

- What should we be doing about AI anyway?

I’m writing about artificial intelligence, but that term is too broad and needs to be defined further. Let’s start with what I mean by intelligence.

Intelligence: The ability to accomplish complex goals

I’ve come to use this definition, put forth by Max Tegmark in his book Life 3.0: Being Human in the Age of Artificial Intelligence. It is broad enough to cover activities shared by humans, non-human animals, and computers, yet not so broad that it gets into questions of consciousness or sentience³. Intelligence cannot be measured against one criterion or scored with a single metric like I.Q., because intelligence is not a single dimension. In the Wired article The Myth of a Superhuman AI, Kevin Kelly states “‘smarter than humans’ is a meaningless concept. Humans do not have general purpose minds, and neither will AIs.” Certain animals outperform us at some cognitive activities, and machines outperform us at others. This is a good reminder that evolution isn’t a ladder and we’re not at the top of it. Evolution creates adaptations that are best suited for particular circumstances.
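To make the "not a single dimension" point a little more concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the skill names, the 0 to 10 scores, and the `dominates` and `compare` helpers are all made up and measure nothing real. It only shows that once ability is a profile of several scores rather than one number, "smarter than" frequently has no answer.

```python
from typing import Dict

# A capability profile maps a skill name to a score.
# All names and numbers below are hypothetical, chosen only for illustration.
Profile = Dict[str, float]

human: Profile = {"arithmetic": 3, "chess": 5, "language": 9, "navigation": 6}
calculator: Profile = {"arithmetic": 10, "chess": 0, "language": 0, "navigation": 0}
chess_engine: Profile = {"arithmetic": 2, "chess": 10, "language": 0, "navigation": 0}


def dominates(a: Profile, b: Profile) -> bool:
    """Return True if a is at least as good as b on every skill
    and strictly better on at least one (missing skills count as 0)."""
    skills = a.keys() | b.keys()
    at_least_as_good = all(a.get(s, 0) >= b.get(s, 0) for s in skills)
    strictly_better = any(a.get(s, 0) > b.get(s, 0) for s in skills)
    return at_least_as_good and strictly_better


def compare(a_name: str, a: Profile, b_name: str, b: Profile) -> str:
    """Describe how two profiles relate without collapsing them to one number."""
    if dominates(a, b):
        return f"{a_name} dominates {b_name}"
    if dominates(b, a):
        return f"{b_name} dominates {a_name}"
    return f"{a_name} and {b_name} are incomparable"


if __name__ == "__main__":
    print(compare("human", human, "calculator", calculator))
    print(compare("human", human, "chess engine", chess_engine))
```

With these made-up numbers, neither the human nor either machine comes out ahead on every skill, so the comparison reports them as incomparable rather than ranking them.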

Artificial Narrow Intelligence (ANI): Specialized in accomplishing a single or narrow goal

This is the type of AI we are used to today. Google Search, Amazon Alexa, Apple Siri, nearly all airline ticketing systems, and countless other products and services use AI to identify people and objects in photos, translate written and spoken language, master games (chess, Jeopardy, Go, and video games), drive cars, and more. We come into contact with this type of AI every day and, for the most part, it doesn’t seem very magical. These sorts of AI can generally do only one thing well, and they have emerged slowly over the past few decades. Many of them now seem mundane.

MarI/O is made of neural networks & genetic algorithms that have learned to kick butt at Super Mario World.

Artificial General Intelligence (AGI): As capable as a human in accomplishing goals across a wide spectrum of circumstances

You and I excel at various things. We can recognize patterns, combine competencies, solve problems, create art, and learn skills we don’t yet have. We use logic, creativity, empathy, and recall, each requiring a different sort of intelligence. We take this diversity and breadth of intelligence for granted, but creating it artificially is the great challenge of our time and is what most people in the field of AI research are trying to accomplish: a computer that is as smart as us across many different dimensions and, crucially, one that can teach itself new things.

Many tests have been devised to ascertain when machine intelligences “become as smart as humans.” These range from the well-known Turing Test to the absurd Coffee Test. They all purport to assess a general capability that, among other things, involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, and learn quickly from experience. I suspect, however, that machines will be supremely impressive well before they pass these tests. I imagine stringing together hundreds or thousands of disparate ANIs into something far more capable than any human in many ways. At that point, it may very well appear to us to be superhuman…
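The idea of stringing narrow systems together can be sketched in a few lines of Python. This is a toy under loud assumptions: the three "specialists" are trivial stand-ins I made up, and the `Ensemble` class is hypothetical, not how any real product is built. It shows only the shape of the idea: breadth comes from routing each task to the one component that can handle it, and anything outside the registered skills simply fails.

```python
from typing import Callable, Dict

# Each narrow specialist does exactly one thing (toy stand-ins, not real AI).
def add_numbers(payload: str) -> str:
    """Sum whitespace-separated numbers."""
    return str(sum(float(x) for x in payload.split()))


def reverse_text(payload: str) -> str:
    """Reverse a string."""
    return payload[::-1]


def count_words(payload: str) -> str:
    """Count whitespace-separated words."""
    return str(len(payload.split()))


class Ensemble:
    """Hypothetical router that hands each task to a single narrow specialist."""

    def __init__(self) -> None:
        self._skills: Dict[str, Callable[[str], str]] = {}

    def register(self, task: str, skill: Callable[[str], str]) -> None:
        self._skills[task] = skill

    def handle(self, task: str, payload: str) -> str:
        skill = self._skills.get(task)
        if skill is None:
            # No transfer between skills: an unregistered task is just a gap.
            return f"no specialist for '{task}'"
        return skill(payload)


ensemble = Ensemble()
ensemble.register("add", add_numbers)
ensemble.register("reverse", reverse_text)
ensemble.register("count", count_words)

if __name__ == "__main__":
    print(ensemble.handle("add", "1 2 3"))
    print(ensemble.handle("reverse", "abc"))
    print(ensemble.handle("paint", "a sunset"))
```

Adding more specialists makes the ensemble look broader from the outside, but it never learns a task nobody registered, which is the gap between a bundle of ANIs and the self-teaching AGI described above.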

Artificial Super Intelligence (ASI): Better than any human at accomplishing any goal across virtually any situation

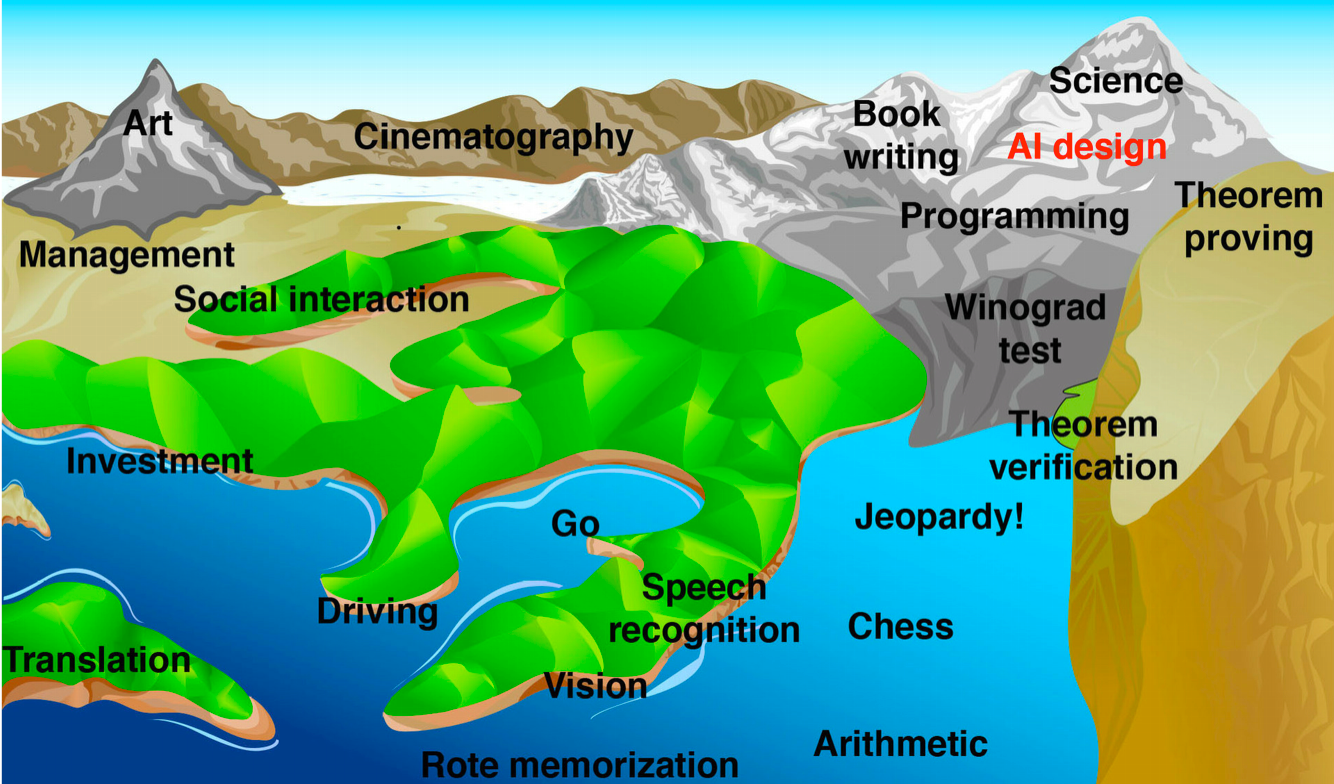

This is how people most often describe computers that are much smarter than humans across a wide range of intelligences. Some say all intelligences, but I’m not sure that is the threshold that needs to be passed. Of course, computers are already superhuman in some arenas. I’ve mentioned some specific games in which humans can no longer compete, but computers have been better than humans at other things for decades: mathematical computation, financial market strategy, memorization, and memory recall come to mind. Soon, more and more of the competencies at which humans excel, such as language translation, visual acuity, perception, and creativity, will be conquered by computers, as the accompanying illustration shows.

Other parts in this series:

Part One: An introduction to the opportunities and threats we face as we near the realization of human-level artificial intelligence

Part Three: The how, when, and what of AI

Part Four: AI and the bright future ahead

Part Five: AI and the bleak darkness that could befall us

Part Six: What should we be doing about AI anyway?

This is such an immense topic that I ended up digressing to explain things in greater detail or to provide additional examples, and these digressions bogged the post down. There are still some important things I wanted to share, so I have included them in a separate endnotes post.
